Can Mainstream Graphics be Accessible?

Introduction

People with print disabilities presently have good access to well-formed electronic text using an audio and/or braille screen reader. Math is accessible in principle when it is expressed in the math markup language MathML, and good progress is being made to make it accessible in practice (Gardner, Soiffer, & Suzuki, 2006; Gardner, 2012; Leas, Persoon, Soiffer, & Zacherl, 2008; Soiffer, 2005). The last frontier in accessibility is graphics. This article briefly discusses the three ways that graphical information is “made accessible” today and the possibility that mainstream graphics could someday be as directly accessible as text and math.

Making Graphics Accessible with a Word Description

The DIAGRAM Project, funded by the Department of Education to make figures in K-12 textbooks accessible, has commissioned a number of studies to determine the best methods for making textbook graphics accessible. One such project is the development of a decision tree (http://diagramcenter.org/research/56-decision-tree.html) for deciding when a word description is or is not adequate. One finding of that project is that the great majority of graphical information in textbooks can be made accessible with a good word description. This holds for most lower-level texts but less so for advanced textbooks in math and science. Even in lower-level texts, some figures, such as maps in history and geography texts, are only marginally useful with a word description. Many graphs and diagrams in business and science articles, and in most professional literature, are also not sufficiently understandable from a word description alone. Such figures need to be explored with the fingers to be fully understood.

Making Graphics Accessible with a Tactile Copy

The Braille Authority of North America (BANA) (http://brailleauthority.org/) and the Canadian Braille Authority (http://www.canadianbrailleauthority.ca/home) have jointly developed a set of guidelines and standards for tactile graphics (http://brailleauthority.org/tg/web-manual/). These standards define spacings, line intensities, symbols, etc. A word description is also required to explain the graphic, since fingers are much less capable of grasping the context of a figure than eyes. Tactile graphics meeting these standards can only be made by a trained tactile graphics transcriber and are consequently quite expensive.

Unfortunately, few people who need access to graphical information can read these tactile graphics. I have observed that people who are not expert braille readers do not even attempt to read a tactile graphic, and that only a small fraction of braille readers can read them. I am unaware of any reliable statistics on either the percentage of blind people who read braille (variously estimated at 10 to 20%) or the percentage of those who read tactile graphics. Two tactile graphics experts (Hastie, Lucia, private communication, Nov 27, 2013; Dietrich, Gaeir, private communication, Nov 27, 2013) agree with my guess that no more than 10 to 25% of braille readers can read a tactile diagram. These circumstances have led to a widespread conviction that word descriptions provide the best access to graphical information, even in cases where the word description is clearly inadequate. The unfortunate consequence is a world in which people with print disabilities are excluded from adequate access to much graphical information.

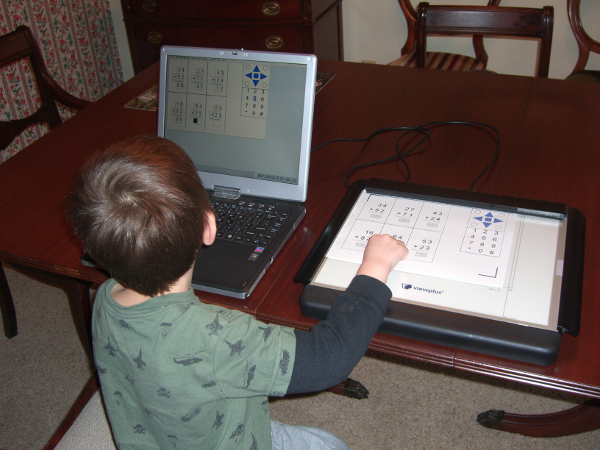

Making Graphics Accessible with an Audio-Tactile Graphic

The difficulty of reading a tactile graphic is greatly reduced if the graphic can explain itself to the reader. Instead of requiring the user to read a braille description and braille labels, an audio-tactile graphic can speak that information. In principle, it can describe the graphic in several ways selectable by the user, speak text labels, and speak the names of important graphical objects, usually when the user selects an object on screen with a mouse or by touching, pressing, or tapping it on a tactile copy. Selecting the same object a second time causes a longer description of that object to be spoken. Not only is this information much easier for most people to access than braille, but a great deal more information can be conveyed about the graphic than is feasible with a stand-alone tactile graphic.
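The select-once-for-the-name, select-again-for-more behavior described above can be sketched in a few lines. This is a minimal illustration, not ViewPlus code: the `GraphicObject` and `AudioTactileReader` names, the rectangular bounding boxes, and the rule that a third selection starts over with the name are all assumptions made for the example. The returned string is what would be handed to a speech synthesizer.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class GraphicObject:
    """One selectable object in a smart figure (hypothetical data model)."""
    name: str          # short label, spoken on the first selection
    description: str   # longer text, spoken when the same object is selected again
    bbox: tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in image units

    def contains(self, x: float, y: float) -> bool:
        x0, y0, x1, y1 = self.bbox
        return x0 <= x <= x1 and y0 <= y <= y1


class AudioTactileReader:
    """Chooses the text to speak each time the user selects a point on the graphic."""

    def __init__(self, objects: list[GraphicObject]):
        self.objects = objects
        self._last: GraphicObject | None = None

    def select(self, x: float, y: float) -> str:
        # Objects later in the list are assumed to be drawn on top, so search from the end.
        hit = next((o for o in reversed(self.objects) if o.contains(x, y)), None)
        if hit is None:
            self._last = None
            return ""                    # nothing selectable here; stay silent
        if hit is self._last:
            self._last = None            # a third selection starts over with the name
            return hit.description
        self._last = hit
        return hit.name
```

For example, with a bar occupying `(10, 5, 20, 45)`, tapping `(15, 20)` once would return the bar's name and tapping it again would return its longer description.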

The audio-tactile technology requires that the tactile copy communicate with some computing device (a PC, smart phone, or note-taker, for example). A touchpad or other hardware device tells the computer what has been selected so that the computer can speak the appropriate information. If a braille display is attached to the computing device, the audio information can equally well be displayed in braille. With the advent of small devices like smart phones and compact note-takers, and of convenient input devices such as electronic pens for communicating with them, audio-tactile graphic access could become as convenient and straightforward as reading digital text with a screen reader.
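For the computer to know what has been selected, a touchpad reading has to be converted back into the coordinate system of the original graphic, undoing the scaling and placement used when the copy was embossed. The sketch below assumes a simple registration model (one uniform scale plus an offset, with an optional flip of the y axis); the `Registration` fields and `pad_to_image` function are hypothetical names, and real hardware may need additional calibration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Registration:
    """Where the embossed copy lies on the touchpad (hypothetical parameters).

    scale:    touchpad units per image unit used when the copy was embossed
    offset_x: touchpad x coordinate of the image's left edge
    offset_y: touchpad y coordinate of the image's top edge
    pad_y_up: True if the touchpad's y axis points up (image y is assumed to point down)
    """
    scale: float
    offset_x: float
    offset_y: float
    pad_y_up: bool = False


def pad_to_image(reg: Registration, pad_x: float, pad_y: float) -> tuple[float, float]:
    """Convert a raw touchpad reading into the original graphic's coordinates."""
    img_x = (pad_x - reg.offset_x) / reg.scale
    # Distance below the image's top edge, measured in touchpad units.
    dy = (reg.offset_y - pad_y) if reg.pad_y_up else (pad_y - reg.offset_y)
    return img_x, dy / reg.scale
```

The resulting point, in the graphic's own units, is what the software would then look up among the figure's named objects before speaking or brailling the result.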

Audio-tactile graphics are not a new development. The technique was first demonstrated by Professor Donald Parkes (1988, 1991). His Nomad tablet was introduced commercially by the American Printing House for the Blind a few years later, but it was expensive and clumsy and was eventually discontinued. Other organizations have used audio-tactile graphics for specialized applications (Landau, 2003; Loetzsch, 1994; Loetzsch & Roedig, 1996). The Nomad experience stimulated me to undertake an intensive exploration of graphical accessibility (Barry, Gardner, & Lundquist, 1994), which convinced me that audio-tactile graphics are the best route to universally accessible mainstream digital graphics (Gardner et al., 1996; Gardner & Bulatov, 1998). I have directed ViewPlus’ pioneering development of audio-graphics software technology (Gardner & Bulatov, 2001; Gardner, 2002; Gardner & Bulatov, 2010; Sahyun, Gardner, & Gardner, 1998) and of Tiger Tactile Graphics and Braille Embossers (Sahyun, Bulatov, Gardner, & Preddy, 1998; Sahyun, Gardner, & Gardner, 1998; Walsh & Gardner, 2001), which have made creating, converting, and reading audio-tactile graphics a practical and relatively inexpensive possibility.

Roadmap to Making Mainstream Digital Graphics Accessible

Smart figures

Academic publishers, in particular the American Physical Society, have collaborated with ViewPlus to explore whether future publications could include accessible figures as well as fully accessible text and math (Gardner, Bulatov, & Kelly, 2009). There is much interest in publishing “smart” graphics because they are valuable to everybody, not just to people with print disabilities. Information contained within the image file can become an essential part of the publication, can provide tutorial information about understanding the figure, and can make the figure much easier to classify for purposes of archiving and searching.

In addition, smart figures in many disciplines can be their own data archive. Most figures show only a few kilobytes of data, so it is entirely practical for the file to contain both the data and an image of the data, not just the latter. Having the data easily accessible within the image file is a boon to scientists who want to compare it with their own data or with theoretical model predictions. The data can also make the graphic much more accessible to people with print disabilities, because it can be presented as audio tones that vary as a function of position, giving excellent access to multi-dimensional data plots that are presently very difficult for people with print disabilities to understand.
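As a rough illustration of presenting data as audio tones, the sketch below turns one data series into a sequence of short tones, one per point in x order, whose pitch rises with the y value. The logarithmic pitch mapping (so equal data steps sound like equal musical intervals), the 220 to 880 Hz range, and the tone length are assumptions chosen for the example, not the method used by any particular product; plots with more dimensions would need further cues.

```python
import math
import struct
import wave


def values_to_frequencies(ys, f_low=220.0, f_high=880.0):
    """Map data values to pitches on a logarithmic scale (equal data steps -> equal intervals)."""
    lo, hi = min(ys), max(ys)
    span = hi - lo
    freqs = []
    for y in ys:
        t = 0.0 if span == 0 else (y - lo) / span   # normalise to 0..1
        freqs.append(f_low * (f_high / f_low) ** t)
    return freqs


def write_sonification(path, ys, tone_seconds=0.15, rate=22050):
    """Write a mono 16-bit WAV in which each data point, in x order, becomes a short tone."""
    frames = bytearray()
    phase = 0.0  # carried across tones so pitch changes do not click
    for f in values_to_frequencies(ys):
        for _ in range(int(tone_seconds * rate)):
            frames += struct.pack("<h", int(0.3 * 32767 * math.sin(phase)))
            phase += 2 * math.pi * f / rate
    with wave.open(path, "wb") as w:
        w.setnchannels(1)
        w.setsampwidth(2)      # 2 bytes = 16-bit samples
        w.setframerate(rate)
        w.writeframes(bytes(frames))
```

Calling `write_sonification("plot.wav", [0, 5, 10])` would produce three rising tones at 220, 440, and 880 Hz.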

Authoring software requirements

In order to make smart figures a reality, software used for authoring graphical information needs a smart image option for saving files. Authors already insert labels into data sets, fitting lines, objects in diagrams, etc. If I use semantically meaningful names, and these names are saved in the descriptive metadata for those objects, the image can be made accessible automatically. I can improve its accessibility further by adding descriptive material, but for most graphical information, the automatically saved information alone can make the image quite accessible.
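A smart image option of this kind could, for example, write SVG in which each labeled object carries its author-given name as an SVG `<title>` and a short `<desc>`, with the plotted numbers stored alongside the picture. The sketch below is a hypothetical exporter (`smart_line_plot` is not an existing API), and storing the data as JSON inside the SVG `<metadata>` element is an assumed convention rather than an established standard.

```python
import json
import xml.etree.ElementTree as ET

SVG_NS = "http://www.w3.org/2000/svg"
ET.register_namespace("", SVG_NS)  # serialize SVG elements without an ns0: prefix


def _q(tag: str) -> str:
    """Qualified SVG tag name for ElementTree."""
    return f"{{{SVG_NS}}}{tag}"


def smart_line_plot(series: dict, title: str, w: int = 400, h: int = 300, pad: int = 20) -> str:
    """Return SVG text in which each named series keeps its name, a description, and its raw data."""
    pts = [p for points in series.values() for p in points]
    x0, x1 = min(x for x, _ in pts), max(x for x, _ in pts)
    y0, y1 = min(y for _, y in pts), max(y for _, y in pts)
    sx = (w - 2 * pad) / ((x1 - x0) or 1)   # avoid dividing by zero for flat data
    sy = (h - 2 * pad) / ((y1 - y0) or 1)

    svg = ET.Element(_q("svg"), width=str(w), height=str(h), viewBox=f"0 0 {w} {h}")
    ET.SubElement(svg, _q("title")).text = title
    # The raw numbers travel with the picture (JSON in <metadata> is an assumption).
    ET.SubElement(svg, _q("metadata")).text = json.dumps(series)

    for i, (name, points) in enumerate(series.items()):
        g = ET.SubElement(svg, _q("g"), id=f"series-{i}")
        # The author's own label becomes the object's accessible name.
        ET.SubElement(g, _q("title")).text = name
        ET.SubElement(g, _q("desc")).text = f"{name}: {len(points)} data points"
        # Data coordinates are mapped into the view box with y pointing down.
        coords = " ".join(f"{pad + (x - x0) * sx:.1f},{h - pad - (y - y0) * sy:.1f}" for x, y in points)
        ET.SubElement(g, _q("polyline"), points=coords, fill="none", stroke="black")
    return ET.tostring(svg, encoding="unicode")
```

Because the names come straight from the labels the author already typed, no extra authoring effort is needed for this baseline level of accessibility.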

Generating good tactile copy

To be directly accessible as an audio-tactile graphic, a smart figure must be printable on an embosser that can produce an acceptable tactile copy (or, at some future date, be displayable as a useful haptic on-screen image). The smart figure can include a special tactile version that is sent to the embosser (or displayed on a haptic screen) instead of the visual image. In publications carefully created to be fully accessible, such special layers are a good option. For most mainstream publications, however, it is not feasible for authors or editors to include special layers, so software must be able to generate an acceptable tactile image directly from the visual image. Someday it might be possible to recognize a photograph and produce a useful tactile copy, as some researchers are already doing for facial images (http://www.nbcnews.com/id/41624232/41626743#.UsW-zbTEnKc), but at present such automatic tactile generation is not really feasible. Photographs, however, are usually sufficiently well described by words. Maps, graphs, and diagrams are the images for which words are often not enough. Fortunately, it is feasible to create good tactile copy from this type of visual image.

When an image is embossed with ViewPlus software and a ViewPlus embosser, it is scaled up automatically so that fingers can more easily distinguish details. The software enlarges the image to the maximum size permitted by the paper, and, if desired, the user can zoom in and print various regions separately. Images emboss by default in a “tactile gray scale,” in which dark regions emboss with tall dots and light regions with small dots. Several important types of figure (line and block graphics, flow diagrams) produce excellent tactile copy without any processing. For many other image types (maps, pie charts, and bar charts), the default image may be adequate, but various user-selectable processing options can make the graphic more usable. These options include edge enhancement, elimination or intensity reduction of color regions, replacement of colors by easily distinguishable patterns and intensities, etc. The word description and/or caption accompanying such images should be detailed enough that even a totally blind user can anticipate which processing option will be most useful. She should seldom need to reprint an image to obtain an acceptable tactile copy.
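The two default steps described above, enlarging to the largest size the paper allows and mapping darkness to dot height, can be sketched as follows. This is not the ViewPlus algorithm: the number of dot heights (`levels=8`), the choice that pure white gets no dot at all, and the use of a linear mapping are assumptions made for illustration.

```python
from __future__ import annotations


def fit_scale(img_w: float, img_h: float, paper_w: float, paper_h: float, margin: float) -> float:
    """Largest uniform scale at which the whole image fits inside the paper's margins."""
    usable_w = paper_w - 2 * margin
    usable_h = paper_h - 2 * margin
    # The tighter of the two directions limits the enlargement.
    return min(usable_w / img_w, usable_h / img_h)


def tactile_gray(gray_rows: list[list[int]], levels: int = 8) -> list[list[int]]:
    """Map 8-bit gray values (0 = black, 255 = white) to dot-height levels.

    Darker pixels get taller dots; pure white gets level 0 (no dot).
    The number of available heights is a hypothetical parameter.
    """
    top = levels - 1
    return [[round((255 - g) * top / 255) for g in row] for row in gray_rows]
```

For instance, a 400 by 300 pixel image on 8.5 by 11 inch paper with half-inch margins would be limited by the width, giving a scale of 7.5 / 400 inches per pixel.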

Conclusions

The underlying technology for accessing smart images by the audio-tactile method is proven. Within a few years, smart phones, tablets, and other ubiquitous modern devices could make audio-tactile graphics access very inexpensive and user-friendly. If well-made smart figures become the norm for mainstream graphical information, people with print disabilities could have direct and excellent access. At present, audio-tactile access requires creating a tactile copy on an embosser. Haptic screen technology, now in its infancy, could eventually develop into a ubiquitous, high-definition method for displaying tactile images that would give immediate, excellent access.

References

Barry, W., Gardner, J., & Lundquist, R. (1994). Books for Blind Scientists: The Technology Requirements of Accessibility. Information Technology and Disabilities, 1(4). Retrieved from http://itd.athenpro.org/volume1/number4/article8.html

Bulatov, V., & Gardner, J. (1998). Visualization by People without Vision. Proceedings of the Workshop on Content Visualization and Intermediate Representations. Montreal, Canada, 103–108.

Gardner, J., Bargi-Rangin, H., Lundquist, R., Barry, W., Preddy, M., Sahyun, S., & Salinas, N. (1996). New Methods of Reading, Writing, and Manipulating Information by People with Print Impairments. Proceedings of the Fifth International Conference on Computers Helping People with Special Needs. Linz, Austria.

Gardner, J., & Bulatov, V. (1998). Non-Visual Access to Non-Textual Information through DotsPlus and Accessible VRML. Proceedings of the 15th IFIP World Computer Congress (Computers and Assistive Technology). Vienna.

Gardner, J., & Bulatov, V. (2001). Smart Figures, SVG, and Accessible Web Graphics. Proceedings of the 2001 CSUN International Conference on Technology and Persons with Disabilities. Los Angeles, CA.

Gardner, J. (2002). Access by Blind Students and Professionals to Mainstream Math and Science. Proceedings of the 8th International Conference, ICCHP 2002. Linz, Austria.

Gardner, J., Soiffer, N., & Suzuki, M. (2006). Emerging Computer Technologies For Accessible Math. Proceedings of the 2006 International Conference on Technology and Persons with Disabilities. Los Angeles, CA. Retrieved from http://www.viewplus.com/about/abstracts/06csungardner.html

Gardner, J., Bulatov, V., & Kelly, R. (2009). Making Journals Accessible to the Visually Impaired – The Future is Near. Learned Publishing, 22(4), 314–319. Retrieved from http://www.viewplus.com/about/abstracts/09learnpubgardner.html

Gardner, J., & Bulatov, V. (2010). Highly Accessible Scientific Graphical Information through DAISY SVG. Proceedings of the 2010 SVGOpen Conference, Paris, France. Retrieved from http://www.svgopen.org/2010/papers/56-Highly_Accessible_Scientific_Graphical_Information_through_DAISY_SVG/index.html

Gardner, J. (2012). More Accessible Math (The LEAN Math Notation). Proceedings of the 13th International Conference, ICCHP, Linz, Austria.

Landau, S. (2003). Use of the Talking Tactile Tablet in Mathematics Testing. Journal of Visual Impairment and Blindness, 97, 85–96.

Leas, D., Persoon, E., Soiffer, N., & Zacherl, M. (2008). DAISY 3: A Standard for Accessible Multimedia Books. IEEE Multimedia, 15(4), 28–37.

Loetzsch, J. (1994). Computer-Aided Access to Tactile Graphics for the Blind. Proceedings of the 4th International Conference on Computers Helping People, Vienna, 575–581.

Loetzsch, J., & Roedig, G. (1996). Interactive Tactile Media in Training Visually Handicapped People. Proceedings from New Technologies in the Education of the Visually Handicapped. Paris, France.

Parkes, D. (1988). Nomad: An Audio-Tactile Tool for the Acquisition, Use and Management of Spatially Distributed Information by Partially Sighted and Blind Persons. Proceedings of the Second International Symposium on Maps and Graphics for Visually Handicapped People. King's College, University of London, 24–29.

Parkes, D. (1991). Nomad: Enabling Access to Graphics and Text Based Information for Blind, Visually Impaired and Other Disability Groups. Proceedings of the World Congress on Technology. Arlington, Virginia. 690–714.

Sahyun, S., Bulatov, V., Gardner, J., & Preddy, M. (1998). A How-To Demonstration For Making Tactile Figures And Tactile Formatted Math Using The Tactile Graphics Embosser. Proceedings of the 1998 CSUN International Conference on Technology and Persons with Disabilities. Los Angeles, CA.

Sahyun, S., Gardner, J., & Gardner, C. (1998). Audio and Haptic Access to Math and Science - Audio graphs, Triangle, the MathPlus Toolbox, and the Tiger printer. Proceedings of the 15th IFIP World Computer Congress (Computers and Assistive Technology). Vienna.

Soiffer, N. (2005). MathPlayer: Web-Based Math Accessibility. Proceedings of the 7th International ACM SIGACCESS Conference on Computers and Accessibility. ACM, New York, NY, 204–205.

Walsh, P., & Gardner, J. (2001). Tiger, A New Age Of Tactile Text And Graphics. Proceedings of the 2001 CSUN International Conference on Technology and Persons with Disabilities. Los Angeles, CA.